Institutional Fundraising in Alternatives: Solving the Consultant Data Bottleneck

Private credit has expanded aggressively, supported by higher-for-longer rates and strong institutional appetite, and has outperformed many alternative strategies. However, rising leverage, borrower stress, and refinancing risk are beginning to test the durability of returns. The same growth has revealed widening dispersion in underwriting quality, covenant protection, and loss performance, making manager and deal selection increasingly critical.

Private equity, by contrast, is adjusting to a slower exit and liquidity environment. This slowdown has shifted consultant focus toward realized value creation and liquidity outcomes rather than forward-looking narratives.

Real estate now shows uneven risk and return outcomes by sector, infrastructure continues to grow on the back of energy-transition and digital demand, and litigation finance is attracting institutional capital.

As a result, return drivers across alternatives have become more idiosyncratic, operational, and risk-specific, and each asset class now demands its own data logic.

In this fragmented and differentiated landscape, institutional consultants have shifted from passive evaluators to data-driven adjudicators. They now require managers to deliver asset-class-specific data that is structured, comparable, and consistently updated across every consultant database, every market-data platform, and all diligence materials. Telling a strong story is no longer enough. Consultants expect data to be accurate, consistent across documents and platforms, and able to demonstrate clearly how the manager's investment process and risk controls actually work. Just as importantly, they expect it to be kept current.

From an institutional allocator's perspective, two digital gatekeepers serve as key evaluation tools long before a meeting is scheduled: (1) consultant databases (Albourne, Aksia, Callan, etc.), which determine who gets screened and shortlisted, and (2) market-intelligence platforms (Preqin, PitchBook, etc.), which determine who is discoverable and comparable. Together they form the real front door to institutional capital, not the pitch deck or the management meeting.

As the universe of alternative managers expands, especially in private credit and real assets, consultants are relying more heavily on structured data for screening and comparative analysis. This makes accurate, up-to-date database profiles a critical component of fundraising visibility. (Source: Preqin and PitchBook databases)

As a result, a manager's data has become its first impression in consultant-driven allocation decisions. This is why modernizing consultant data processes is not operational housekeeping; it is strategic infrastructure for fundraising success.

If data such as AUM, headcount, performance, or strategy descriptions differ across portals, the firm deck, and the latest DDQ, the discrepancies can trigger concerns about operational discipline and internal controls. For many GPs, such mismatches do not reflect their investment process; they are simply the result of fragmented data ownership and outdated workflows.
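A cross-source consistency check can catch these mismatches before a consultant does. The Python sketch below is a minimal illustration that assumes a hypothetical `Disclosure` record holding a few comparable fields. The source labels, field names, sample values, and the 0.5% AUM rounding tolerance are all illustrative choices, not an industry standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Disclosure:
    """One source's version of a few consultant-facing fields (hypothetical schema)."""
    source: str       # e.g. "portal", "deck", "ddq"
    aum_usd_m: float  # AUM in USD millions
    headcount: int
    strategy: str


def find_mismatches(disclosures, aum_tolerance=0.005):
    """Return (field, {source: value}) pairs for every field where sources disagree.

    AUM is compared with a relative tolerance to absorb rounding differences;
    headcount must match exactly, and strategy text must match after trimming
    surrounding whitespace.
    """
    if not disclosures:
        return []
    issues = []
    aums = {d.source: d.aum_usd_m for d in disclosures}
    if max(aums.values()) - min(aums.values()) > aum_tolerance * max(aums.values()):
        issues.append(("aum_usd_m", aums))
    heads = {d.source: d.headcount for d in disclosures}
    if len(set(heads.values())) > 1:
        issues.append(("headcount", heads))
    strategies = {d.source: d.strategy.strip() for d in disclosures}
    if len(set(strategies.values())) > 1:
        issues.append(("strategy", strategies))
    return issues


# Illustrative values: the deck carries a stale headcount.
sources = [
    Disclosure("portal", 2410.0, 42, "Senior secured direct lending"),
    Disclosure("deck", 2415.0, 45, "Senior secured direct lending"),
    Disclosure("ddq", 2410.0, 42, "Senior secured direct lending"),
]
for field_name, values in find_mismatches(sources):
    print(field_name, values)
```

In this example the AUM gap falls within the assumed tolerance, so only the headcount mismatch is reported. This is the kind of discrepancy that would otherwise reach a consultant unnoticed.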

Most firms still rely on manual workflows because critical data is spread across investor letters, decks, spreadsheets, and filings that were never designed to operate as a system. IR teams spend a disproportionate amount of time reconciling versions, reformatting content, and manually updating multiple platforms. Ownership is fragmented across IR, Product, Operations, and Compliance teams, which turns portal updates into a last-mile scramble rather than a controlled process. The result is a slow and reactive workflow.

Inside most alternative asset managers, these platform updates are handled by a mix of IR analysts, product specialists, junior operations staff, and compliance reviewers. These teams are designed for relationship management, investment storytelling, operational support, or content review, not data engineering. Yet the work itself requires extracting, structuring, mapping, and reconciling data across platforms. This misalignment leaves the process fundamentally unstandardized and drives inefficiency and inconsistency at its core.

Most asset managers still treat this reporting as an operational afterthought, partly because senior management often underestimates its impact. Despite its direct influence on fundraising outcomes, it is handled as a back-office task rather than as strategic infrastructure. Firms invest heavily in CRM systems, investor presentations, and data rooms, while the systems that determine consultant visibility and screening remain underfunded and understaffed.

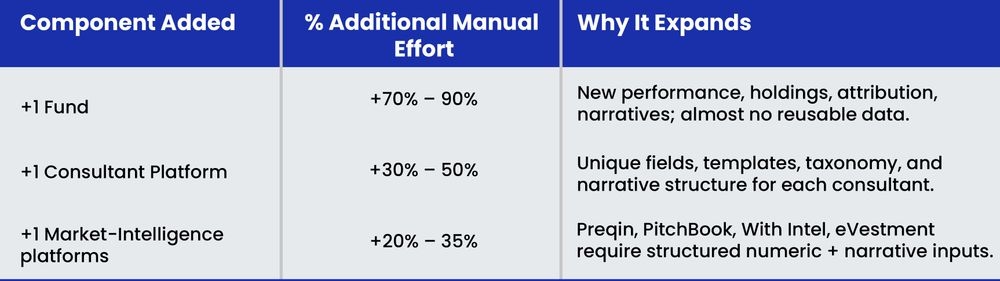

Taken together, these factors cause the workload to grow disproportionately with every additional fund or new platform. The following model shows the true compounding load inside a manual system.

% Additional Effort vs. Baseline (First Fund + First Platform)

Even a mid-sized manager with 3–5 funds across 5 consultant platforms and 2–3 market-intelligence platforms faces a workload of 8–15x the baseline effort, all of it driven by manual uplift.

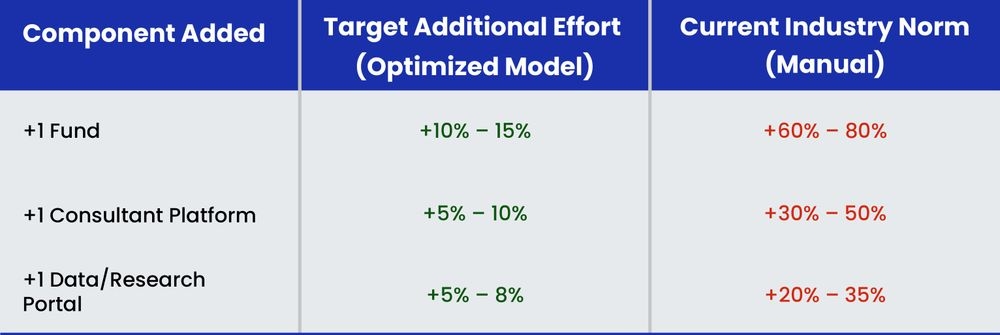

This points to a ~88% total efficiency gain relative to the baseline when moving from manual processes to an AI-driven model, along with better consistency, version control, and turnaround time.

Meanwhile, the consequences are immediate and visible to consultants:

In the target operating model, growth becomes scalable: new funds or platforms no longer multiply the workload but add only marginal effort.
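The difference between compounding and marginal effort can be illustrated with a toy model. The sketch below is hypothetical. The 50% uplift per additional fund and per additional platform in the manual case, and the 20% and 10% increments in the target case, are assumed rates chosen for illustration. They are not the parameters behind the chart above.

```python
def manual_effort(funds: int, platforms: int,
                  fund_uplift: float = 0.5, platform_uplift: float = 0.5) -> float:
    """Effort relative to a baseline of 1 fund on 1 platform (= 1.0) when every
    fund/platform combination is maintained by hand. Each extra fund and each
    extra platform adds an assumed uplift, and the two uplifts multiply."""
    return (1 + fund_uplift * (funds - 1)) * (1 + platform_uplift * (platforms - 1))


def target_effort(funds: int, platforms: int,
                  fund_marginal: float = 0.2, platform_marginal: float = 0.1) -> float:
    """Effort relative to the same baseline when data lives in one central
    framework and is mapped out to each platform. Extra funds and platforms
    add small, assumed increments that sum rather than multiply."""
    return 1 + fund_marginal * (funds - 1) + platform_marginal * (platforms - 1)


for funds, platforms in [(1, 1), (3, 7), (5, 8)]:
    print(f"{funds} funds x {platforms} platforms: "
          f"manual {manual_effort(funds, platforms):.1f}x, "
          f"target {target_effort(funds, platforms):.1f}x")
```

With these assumed rates, the manual model gives 8.0x at 3 funds across 7 platforms and 13.5x at 5 funds across 8 platforms, inside the 8–15x range quoted earlier. The target model stays at 2.0x and 2.5x. The design difference is the point: manual uplifts multiply, whereas centralized increments add.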

Asset managers should create a single, comprehensive internal data framework that captures every unique field requested across consultant databases, DDQs, RFPs, ESG questionnaires, market-data portals, and LP reporting. All information should flow into this one structure, including performance, strategy descriptions, AUM, headcount, risk, ESG, compliance, and governance. This eliminates duplication, creates a single source of truth, centralizes ownership, and ensures that any update enriches the entire institution's data backbone.
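One way to picture this framework is as a registry that holds exactly one record per unique field, with each record tracking every destination that requests it. The sketch below assumes hypothetical class names (`FieldRecord`, `DataFramework`), canonical field names, destination labels, and sample values. It illustrates the deduplication idea rather than a production schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class FieldRecord:
    """One unique field in the internal framework (hypothetical schema)."""
    name: str          # canonical internal name
    owner: str         # accountable team, e.g. "Operations"
    category: str      # e.g. "firm", "performance", "ESG"
    requested_by: set[str] = field(default_factory=set)  # destinations needing it
    value: object = None
    as_of: date | None = None


class DataFramework:
    """Single source of truth: one record per unique field, however many
    destinations (consultant databases, DDQs, RFPs, ESG questionnaires,
    market-data portals, LP reports) request it."""

    def __init__(self) -> None:
        self.fields: dict[str, FieldRecord] = {}

    def register(self, name: str, owner: str, category: str, destination: str) -> FieldRecord:
        # Re-registering an existing field only records the extra destination.
        record = self.fields.get(name)
        if record is None:
            record = self.fields[name] = FieldRecord(name, owner, category)
        record.requested_by.add(destination)
        return record

    def update(self, name: str, value: object, as_of: date) -> None:
        # A single update serves every destination that requests this field.
        record = self.fields[name]
        record.value, record.as_of = value, as_of


fw = DataFramework()
for destination in ["consultant_db_a", "ddq", "rfp", "market_data_portal_b"]:
    fw.register("firm_aum_usd_m", "Operations", "firm", destination)
fw.register("esg_policy_summary", "Compliance", "ESG", "esg_questionnaire")
fw.update("firm_aum_usd_m", 2410.0, date(2024, 12, 31))

print(len(fw.fields))  # 2
print(sorted(fw.fields["firm_aum_usd_m"].requested_by))
```

Four destinations ask for AUM, yet the framework holds one AUM record with one owner and one as-of date.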

The internal framework must be supported by a clean data-flow architecture where information is sourced once from:

Each owner contributes only once per cycle, and the data is automatically applied across consultant and market-data requirements. This replaces the current email-driven, document-heavy process and ensures consistency across all disclosures.
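The "contribute once" rule can be enforced by checking each submission against an ownership map. This is a minimal sketch under stated assumptions: the `OWNERS` map, team names, field names, and values are hypothetical, and a real implementation would add audit trails and approval steps.

```python
# Hypothetical ownership map: the single team accountable for each field.
OWNERS = {
    "firm_aum_usd_m": "Operations",
    "headcount": "Operations",
    "net_irr_pct": "Product",
    "strategy_description": "IR",
    "compliance_policy_summary": "Compliance",
}


def apply_cycle(master, submissions):
    """Merge one reporting cycle of owner submissions into a copy of the master record.

    `submissions` maps team -> {field: value}. A team may only set fields it
    owns; other entries are rejected instead of overwriting another owner's
    data. Returns (updated_master, rejected, missing), where `missing` lists
    owned fields that were not refreshed this cycle.
    """
    updated = dict(master)
    rejected, supplied = [], set()
    for team, values in submissions.items():
        for name, value in values.items():
            if OWNERS.get(name) != team:
                rejected.append((team, name))
                continue
            updated[name] = value
            supplied.add(name)
    missing = sorted(set(OWNERS) - supplied)
    return updated, rejected, missing


master, rejected, missing = apply_cycle({}, {
    "Operations": {"firm_aum_usd_m": 2410.0, "headcount": 42},
    "Product": {"net_irr_pct": 11.2, "headcount": 45},  # headcount is not Product's field
    "IR": {"strategy_description": "Senior secured direct lending"},
})
print(rejected)  # [('Product', 'headcount')]
print(missing)   # ['compliance_policy_summary']
```

Rejecting out-of-scope entries keeps one owner per field. The `missing` list flags data that was not refreshed this cycle before it goes stale on a platform.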

AI/LLMs should be used to pull structured fields from source documents, generate draft narratives, refine language, and tune responses to compliance standards. This significantly reduces manual effort, speeds up updates, prevents repetitive work, and creates consistent language across all consultant and data-provider platforms. This automation is critical to achieving scale and reducing operational cost.
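Because LLM output can be wrong, an extraction step is usually wrapped in checks before anything reaches a consultant platform. The sketch below does not call a real model. `regex_stand_in` is a hypothetical placeholder for an LLM extraction call, and `SCHEMA`, the field names, and the sample sentence are illustrative. The sketch shows the surrounding pattern: type-check each extracted value, confirm it appears verbatim in the source, and route anything else to human review.

```python
import re
from typing import Callable

# Hypothetical target schema: field name -> expected Python type.
SCHEMA = {"firm_aum_usd_m": float, "headcount": int}


def regex_stand_in(text: str) -> dict:
    """Placeholder for an LLM extraction call (hypothetical). A real pipeline
    would prompt a model to return values for SCHEMA; two regexes stand in here."""
    out = {}
    aum = re.search(r"AUM of \$([\d,]+(?:\.\d+)?) million", text)
    if aum:
        out["firm_aum_usd_m"] = float(aum.group(1).replace(",", ""))
    staff = re.search(r"(\d+) employees", text)
    if staff:
        out["headcount"] = int(staff.group(1))
    return out


def _as_token(value) -> str:
    # Render a number as it would plausibly appear in prose once thousands
    # separators are removed: no trailing zeros, no trailing decimal point.
    if isinstance(value, float):
        return format(value, "f").rstrip("0").rstrip(".")
    return str(value)


def extract_with_checks(text: str, extractor: Callable[[str], dict]):
    """Run an extractor, accept only values that have the expected type and
    appear verbatim in the source, and route everything else to human review."""
    raw = extractor(text)
    source = text.replace(",", "")  # ignore thousands separators when matching
    accepted, review = {}, {}
    for name, expected_type in SCHEMA.items():
        value = raw.get(name)
        # Whole-number match: no digit or point before, no digit or decimal part after.
        pattern = rf"(?<![\d.]){re.escape(_as_token(value))}(?!\d|\.\d)"
        if isinstance(value, expected_type) and re.search(pattern, source):
            accepted[name] = value
        else:
            review[name] = value
    return accepted, review


doc = "As of 31 December, the firm managed AUM of $2,410 million with 42 employees."
print(extract_with_checks(doc, regex_stand_in))
# A hallucinated headcount is caught by the grounding check.
print(extract_with_checks(doc, lambda t: {"firm_aum_usd_m": 2410.0, "headcount": 45}))
```

Taking the extractor as a parameter means the checks stay the same whichever model or prompt is used behind it.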

The internal consultant-data template should map cleanly into the unique formats required by each consultant and data platform. This mapping ensures that once data is updated internally, generating platform-ready outputs becomes a low-effort, repeatable process. Incremental work for new funds or new platforms remains minimal, enabling scalable multi-strategy reporting.
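This kind of mapping can be expressed as data. For each platform, a table maps the platform's field name to an internal field plus a transform, such as a unit conversion or a character limit. In the sketch below, the platform names, target field labels, and the 40-character limit are hypothetical. Under this approach, onboarding a new platform means adding one mapping entry rather than re-keying data.

```python
import textwrap

# Illustrative internal record, keyed by hypothetical canonical field names.
INTERNAL = {
    "firm_aum_usd_m": 2410.0,
    "headcount": 42,
    "strategy_description": "Senior secured direct lending to middle-market companies.",
}

# Hypothetical per-platform mappings: platform field -> (internal field, transform).
PLATFORM_MAPPINGS = {
    "platform_a": {
        "Total AUM (USD bn)": ("firm_aum_usd_m", lambda v: round(v / 1000, 2)),
        "Employees": ("headcount", int),
        "Strategy": ("strategy_description", str),
    },
    "platform_b": {
        "aum_usd": ("firm_aum_usd_m", lambda v: v * 1_000_000),
        # Assumed 40-character limit; shorten at a word boundary.
        "strategy_summary": (
            "strategy_description",
            lambda s: textwrap.shorten(s, width=40, placeholder="..."),
        ),
    },
}


def render(platform, internal=INTERNAL, mappings=PLATFORM_MAPPINGS):
    """Produce a platform-ready payload from the internal record.

    Raises KeyError if the mapping references an internal field that has not
    been populated, so gaps surface before submission rather than after.
    """
    return {
        target: transform(internal[source])
        for target, (source, transform) in mappings[platform].items()
    }


for name in PLATFORM_MAPPINGS:
    print(name, render(name))
```

Both payloads come from the same internal values, so an update to the internal record reaches every platform on the next render.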

A unified data framework, supported by AI and a structured workflow, gives asset managers a cleaner and more scalable way to manage consultant and market-data updates. It reduces duplication, lowers manual effort for new funds and platforms, and significantly cuts down errors and inconsistencies.

Crucially, it allows asset-class-specific data requirements, whether underwriting detail, realization metrics, asset-level KPIs, long-duration risk factors, or case-level disclosures, to be managed within a single, consistent architecture.

With one shared reference point across teams, updates become faster, leaner, and more reliable, which strengthens consultant visibility and improves overall fundraising efficiency.

Consultant data refers to structured information submitted by asset managers across consultant databases and market platforms, used for screening, comparison, and evaluation.

It determines visibility and shortlisting before investor meetings. Inaccurate or inconsistent data can reduce credibility and delay fundraising outcomes.

The main challenges are fragmented data sources, manual workflows, inconsistent reporting across platforms, and the lack of a standardized data architecture.

Asset managers can address these challenges by implementing a unified data framework, structured workflows, and automated validation processes across all reporting platforms.

AI enables automated data extraction, standardization, and mapping across platforms, reducing manual effort and improving accuracy at scale.